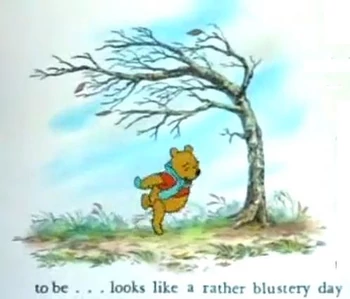

Preparing for "A rather blustery day"

"Oh the wind is lashing lustily

And the trees are thrashing thrustily

And the leaves are rustling gustily

So it's rather safe to say

That it seems that it may turn out to be

Feels that it will undoubtedly

It looks like a rather blustery day, today

It seems that it may turn out to be

Feels that it will undoubtedly

Looks like a rather blustery day, today"

"A Rather Blustery Day" - Winnie the Pooh

Driving around with my two-year-old in the car, watching Winnie the Pooh is a constant. The extreme weather of the last few days seems to fit the song lyrics.

I am sitting here on a snow day with the latest Nor'easter dumping what is estimated to be at least a foot of snow. Friday we all went through the carnage of the last Nor'easter with its massive winds. Near my house people still don't have power, and we are still driving over downed wires ripped off poles. The internet, TV, and phone still come and go. The number of trees uprooted and knocked down is incredible. The local high school is now open as an emergency shelter where people can get out of the storm, sleep, and get food and a shower.

All of this reminds me of the need to prepare for the worst to protect our data centers and the integrity of the district's data.

Starting with the basics, every server should be connected to a UPS with a NIC in it and set for a graceful shutdown in the event of a power failure. I can't tell you how many servers have trashed themselves over the years just because the power went out. This is easy to prevent and doesn't cost much.

Second, get a generator for the data center. However, make sure that you ACTUALLY TEST THE GENERATOR regularly so you know it will work when you need it to work. Again, the number of people who have generators but still had failures is substantial. Bad maintenance is generally the reason. We test weekly during the day so we can actually hear it running.

Regardless of the generator, always have your virtual and physical server farms configured for graceful shutdown from the UPS. Many districts have skipped this step because "they have a generator". This is always your plan B.
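To make the "plan B" idea concrete, here is a minimal sketch of the decision a UPS-driven shutdown has to make. It is only an illustration: the function name, the parameters, and the five-minute safety margin are all assumptions of mine, not settings from any particular UPS vendor's software. The point is that a brief switch to battery while the generator starts should not trigger a shutdown, but running low on battery runtime should.

```python
def should_start_shutdown(on_battery, runtime_left_min, shutdown_needs_min,
                          safety_margin_min=5):
    """Decide whether it is time to begin a graceful shutdown.

    All names and the default margin are hypothetical, for illustration only.
    on_battery         -- True if the UPS reports it is running on battery
    runtime_left_min   -- estimated battery runtime remaining, in minutes
    shutdown_needs_min -- how long your servers need to shut down cleanly
    safety_margin_min  -- extra cushion so the shutdown finishes before the battery dies
    """
    if not on_battery:
        # Utility or generator power is present, so there is nothing to do.
        return False
    # Start shutting down while there is still enough runtime to finish cleanly.
    return runtime_left_min <= shutdown_needs_min + safety_margin_min


# Generator picked up the load: no shutdown.
print(should_start_shutdown(False, 2, 10))
# On battery with plenty of runtime left: keep waiting for the generator.
print(should_start_shutdown(True, 30, 10))
# On battery and runtime is nearly down to what the shutdown needs: go now.
print(should_start_shutdown(True, 14, 10))
```

In practice your UPS management software makes this call for you. The sketch just shows why you need to know how long a clean shutdown of your hosts really takes before you set those thresholds.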

Next, make sure you have good backups. Make sure you have off-site backups. Many BOCES or RICs have excellent backup services. Or you can run Veeam, with or without a cloud backup option. Some districts do both.

Make sure you have built redundancy into your virtual servers and SANs. The design premise is simple: add up all the memory required to run all your VMs, then prove to yourself that you still have at least that much memory available when one virtual server host has been removed.
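That memory check is simple arithmetic, and a few lines of Python can do it for you. This is a rough sketch under my own assumptions: the host and VM sizes are made-up numbers, the function name is hypothetical, and I treat the largest host as the one that fails so the check covers the worst case. It also ignores hypervisor overhead, so leave yourself some headroom.

```python
def survives_host_failure(host_memory_gb, vm_memory_gb):
    """Return True if every VM still fits in memory after losing one host.

    host_memory_gb -- list of installed memory per virtual server host (GB)
    vm_memory_gb   -- list of memory assigned to each VM (GB)
    """
    required = sum(vm_memory_gb)
    # Worst case (an assumption): the host with the most memory is the one that fails.
    remaining = sum(host_memory_gb) - max(host_memory_gb)
    return remaining >= required


# Example numbers are invented for illustration.
hosts = [256, 256, 256]           # three hosts, 768 GB total
vms = [64, 32, 32, 128, 96, 48]   # VMs need 400 GB in total

# With one 256 GB host gone, 512 GB remains, which covers the 400 GB needed.
print(survives_host_failure(hosts, vms))  # True
```

If the answer comes back False, you either need more memory in the hosts, another host, or a list of VMs you are willing to leave powered off during a failure.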

Make sure you have a second SAN replicating your data from the first SAN, either across the district or back to the BOCES or RIC, so that you have live data "some place else".

Better yet, implement a DR virtual server host, either across the district or at the BOCES or RIC, sitting right next to your redundant SAN. That way, if the main data center is inoperable, you can spin up the secondary core servers and do what you need to do to keep the core district data operations functioning.

Make sure you have a temperature sensor in your main data center. We have had AC failures in data centers due to:

- Electrical outage

- Flooding due to roof repair

- Blizzard conditions burying the air conditioners on the roof.

- Equipment failure.

Letting your data center overheat will lead to hardware failures.
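A temperature sensor only helps if something acts on its readings. As a simple sketch of that idea, the function below sorts a reading into "ok", "warning", or "critical". The function name and both thresholds are placeholders I picked for illustration. Set your own based on your equipment vendor's guidance and how long it takes someone to get to the room.

```python
def classify_temperature(temp_c, warn_c=27.0, critical_c=32.0):
    """Classify a data center temperature reading in degrees Celsius.

    The default thresholds are illustrative assumptions, not recommendations.
    """
    if temp_c >= critical_c:
        # Hot enough that a graceful shutdown may be the safest move.
        return "critical"
    if temp_c >= warn_c:
        # Warmer than it should be: send someone to check on the AC.
        return "warning"
    return "ok"


for reading in (21.5, 28.0, 35.0):
    print(reading, classify_temperature(reading))
```

The warning level matters most. It buys you time to react to a failed AC unit before the room reaches the point where hardware starts to suffer.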

Finally, if you are not yet doing it, consider overlaying CSI's Paladin Sentinel Monitoring to watch over and report on your physical and virtual infrastructure and servers, and to let you know when your equipment, power, or internet connectivity is in trouble.

If you think that your disaster planning needs a tune-up, give us a call.

Scott Quimby
